PS6000XV MPIO and Disk Read Performance Problems Again!

A quick question before going into the details:

Is a single Equallogic volume LIMITED TO 1Gbps of bandwidth at most? (ie, the volume won’t send/receive more than 125MB/sec even if there are multiple MPIO NICs and iSCSI sessions connected to it.) Does this apply only to a volume that sits on just one member, or can a volume break the 125MB/sec limit if it spans 2 or more members? (for example, 250MB/sec if the volume is spread over 2 members)

Summary (2 Problems Found)

a. PS6000XV MPIO DOESN’T work properly and is limited to 1Gbps on ONE interface only on the server (initiator side)

b. 100% Random Read IOmeter performance is 1/2 of 100% Random Write

Testing Environment:

a. iSCSI Target Equallogic: PS6000XV, 1 array member only, loaded with the latest firmware 5.0.2 and HIT 3.4.2, configured as RAID10 (16 x 600GB 15K SAS disks). The HIT Kit and MPIO are installed properly; in MPIO, MSFT2005iSCSIBusType_0x9 is showing beside the EQL DSM.

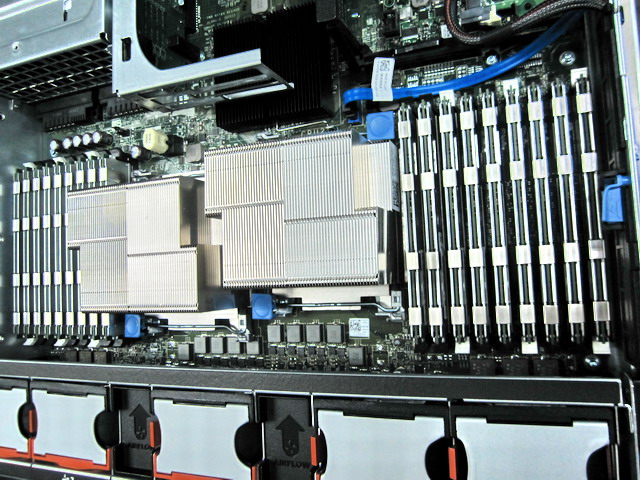

b. iSCSI Initiator Server: PowerEdge R610 with the latest firmware (BIOS, H700 RAID, Broadcom 5709C quad-port, etc)

c. iSCSI Initiators: Two Broadcom 5709C ports (one from the LOM, one from the add-on 5709C quad-port card), using the Microsoft software iSCSI Initiator (that is, not the Broadcom hardware iSCSI initiator mode). No teaming (I didn’t even install Broadcom’s teaming software, as I want to make sure the teaming driver doesn’t load into Windows). I’ve also disabled all offload features as well as RSS and Interrupt Moderation, enabled Flow Control for “TX & RX”, and set the Jumbo Frame MTU to 9000 (the EQL Group Manager event log confirms that the initiator is indeed connecting with Jumbo Frames). Each NIC has a different IP in the same subnet as the EQL group IP.

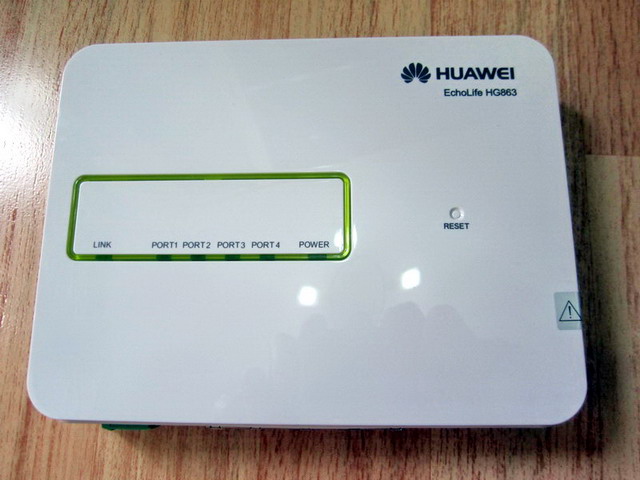

d. Switches: Redundant PowerConnect 5448s, set up according to the best practice guide: Flow Control and Jumbo Frames enabled, STP with Fastports, LAG, a separate VLAN for iSCSI, and iSCSI Optimization disabled. I tested that redundancy works by unplugging different ports, switching off one of the switches, etc.

e. IOMeter Version: 2006.07.27

f. Windows Firewall has been turned off for the internal network (ie, the subnet of those two Broadcom 5709C NICs)

g. No errors at all are showing after a clean reboot.

h. Created two thick volumes (50GB each) on the EQL and granted access to the iSCSI names (IQNs) of the above two NICs.

Using the HIT Kit, we set the MPIO policy to “Least Queue Depth”. Even with just one member, we want to increase the number of iSCSI sessions to the volumes on that member, so we also set Max sessions per volume slice to 4 and Max sessions per entire volume to 12. Right away we see the two NICs/iSCSI initiators connect to the volumes over 8 paths (2 paths from each NIC to a volume x 2 NICs x 2 volumes).
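The path count above can be checked with a few lines of Python. This is only a sketch: it assumes the HIT Kit opens as many sessions as the two limits allow and that a volume on a single member has exactly one slice. The function names are made up for illustration and are not part of any Dell tool.

```python
def sessions_per_volume(members: int, max_per_slice: int, max_per_volume: int) -> int:
    """Sessions opened to one volume, assuming one slice per member (assumption)."""
    return min(members * max_per_slice, max_per_volume)


def total_paths(volumes: int, members: int, max_per_slice: int, max_per_volume: int) -> int:
    """Total iSCSI sessions (paths) across all volumes."""
    return volumes * sessions_per_volume(members, max_per_slice, max_per_volume)


# Values from the setup above: 1 member, 2 volumes, 2 NICs,
# max 4 sessions per volume slice, max 12 per entire volume.
per_volume = sessions_per_volume(members=1, max_per_slice=4, max_per_volume=12)
per_nic_per_volume = per_volume // 2  # sessions spread evenly over the 2 NICs
paths = total_paths(volumes=2, members=1, max_per_slice=4, max_per_volume=12)

print(per_volume, per_nic_per_volume, paths)
```

With one member the per-slice limit of 4 is the one that applies, which gives 2 sessions per NIC per volume and 8 paths in total, matching what the initiator shows.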

IOMeter Test Results:

2 workers, with a 1GB test file on each of the iSCSI volumes.

a. 100% Random, 100% WRITE, 4K Size

- Least Queue Depth is working correctly, as all interfaces are showing different MB/sec.

- IOPS is showing an impressive number, over 4000.

b. 100% Random, 100% READ, 4K Size

- Least Queue Depth DOESN’T SEEM TO work correctly, as all interfaces are showing equal/balanced MB/sec. (Looks like Round Robin to me, but the policy has been set to Least Queue Depth)

- IOPS is showing 2000, which is 1/2 of Random Write’s 4000 IOPS, STRANGE!
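To put the 4K random numbers in perspective, here is a rough conversion from IOPS to throughput. It is a sketch using decimal megabytes and the approximate IOPS figures quoted above; the function name is made up for illustration.

```python
def iops_to_mb_per_s(iops: float, block_size_bytes: int) -> float:
    """Throughput in MB/s (decimal) for a given IOPS rate and block size."""
    return iops * block_size_bytes / 1_000_000


BLOCK_4K = 4096
GIGABIT_LINK_MB_S = 125.0  # 1 Gbps / 8 bits per byte, ignoring protocol overhead

write_mb_s = iops_to_mb_per_s(4000, BLOCK_4K)
read_mb_s = iops_to_mb_per_s(2000, BLOCK_4K)

print(f"random write: {write_mb_s:.1f} MB/s ({write_mb_s / GIGABIT_LINK_MB_S:.0%} of one link)")
print(f"random read:  {read_mb_s:.1f} MB/s ({read_mb_s / GIGABIT_LINK_MB_S:.0%} of one link)")
```

At these rates the 4K random tests move well under 20MB/s, so they are bounded by IOPS rather than by the link. Only the 64K sequential tests come close enough to 125MB/s to show whether the second NIC is carrying load.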

c. 100% Sequential, 100% WRITE, 64K Size

- Least Queue Depth is working correctly, as all interfaces are showing different MB/sec.

d. 100% Sequential, 100% READ, 64K Size

- Least Queue Depth DOESN’T SEEM TO work correctly, as all interfaces are showing equal/balanced MB/sec. (Looks like Round Robin to me, but the policy has been set to Least Queue Depth)

In all of the above tests (a to d), the 4 EQL interfaces reached a total of 120MB/s ONLY. Somehow traffic is FIXED to one NIC on the R610 and MPIO didn’t kick in even after I waited for 5 minutes, so the whole time there is only one NIC participating in the test. I was expecting 250MB/s with 2 NICs, as there are 8 iSCSI sessions/paths to the two volumes.
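The expectation of 250MB/s comes from simple link arithmetic, sketched below. It uses decimal units and ignores TCP/iSCSI overhead, so real-world ceilings are a little lower; the helper names are made up for illustration.

```python
def link_capacity_mb_per_s(gbps: float, links: int = 1) -> float:
    """Raw capacity in MB/s of `links` links of `gbps` each (decimal units)."""
    return gbps * 1000 / 8 * links


def utilisation(observed_mb_per_s: float, gbps: float, links: int) -> float:
    """Fraction of the raw capacity actually used."""
    return observed_mb_per_s / link_capacity_mb_per_s(gbps, links)


one_nic = link_capacity_mb_per_s(1.0, links=1)   # 125.0
two_nics = link_capacity_mb_per_s(1.0, links=2)  # 250.0

print(f"1 NIC: {one_nic:.0f} MB/s, 2 NICs: {two_nics:.0f} MB/s")
print(f"120 MB/s is {utilisation(120, 1.0, 1):.0%} of one link")
print(f"120 MB/s is {utilisation(120, 1.0, 2):.0%} of two links")
```

An observed 120MB/s is almost exactly one saturated gigabit link, which is consistent with only one NIC doing the work.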

I even tried disabling the active iSCSI NIC on the R610; as expected, the standby NIC kicked in immediately without dropping any packets. But I just can’t get BOTH NICs to load-balance the total throughput, and I am not happy with 120MB/sec from 2 NICs. I thought Equallogic would load-balance iSCSI traffic between the connected iSCSI initiator NICs.

SAN HQ reports no retransmit errors at all (always below 2.0%). One error was found though, saying one of the EQL interfaces is sometimes saturated at 99.8%. (Is this due to Least Queue Depth?)

Findings (again 2 Problems Found)

a. PS6000XV MPIO DOESN’T work properly and is limited to 1Gbps on ONE interface only on the server (initiator side)

b. 100% Random Read IOmeter performance is 1/2 of 100% Random Write

I read somewhere on Google saying EQL’s limit on each volume is 125MB/s:

“Though the backend can be 4 Gbps (or 20 Gbps on PS6x10 series), each volume on the EqualLogic can only have 1 Gbps capacity. That means, your disk write/read can go no more than 125 MB/s, no matter how much backend capacity you have.”

“It turns out that the issue was related to the switch. When we finally replaced the HP with a new Dell switch we were able to get multi-gigabit speeds as soon as everything was plugged in.”

I don’t think there is anything wrong with the switch settings, as we also connect two other R710s running VMware and we constantly see 200MB+, so there must be some setting problem on the R610.

Could it be:

a. Would setting the MPIO policy back to Round Robin effectively use the 2nd NIC (path)?

b. Does any setting need to be changed in the Broadcom NIC’s Advanced settings? Enable RSS and Interrupt Moderation again?

Anyone? Please kindly advise, Thanks!

(Note: Equallogic CONFIRMED THERE IS NO SUCH 1Gbps limit per volume)

Update:

Something changed... for the better, finally!!!

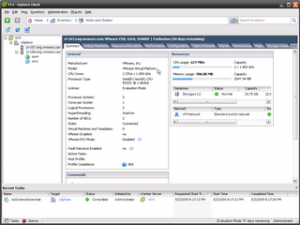

After taking a shower, I decided to run IOmeter in a VM instead of on the physical machine.

FYI, I’ve installed MEM and upgraded the firmware to 5.0.2; I think those helped!

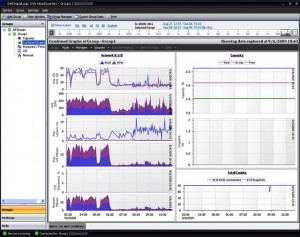

First time in history on my side!!! It’s FINALLY over 250MB/s during 100% Seq. Write, 0% Random, and over 300MB/s during 100% Seq. Read, 0% Random.

1. Do the IOPS look good? (100% RANDOM, 4K: Write is about 4,500 IOPS and Read is about 4,000 IOPS, on a PS6000XV with 1 member only)

2. Does the throughput look fine? Currently it is 300MB/sec for Read and 250MB/sec for Write, and I can add more workers to get it to the 400MB/sec peak.

Now if 1-2 above are both OK, then we are left with only 1 big question:

Why doesn’t this work on physical Windows Server 2008 R2? The MPIO load-balancing somehow never kicks in; only failover works.

Solution FOUND! (October 8, 2010)

C:\Users\Administrator>mpclaim -s -d

For more information about a particular disk, use ‘mpclaim -s -d #’ where # is the MPIO disk number.

MPIO Disk    System Disk  LB Policy    DSM Name

-------------------------------------------------------------------------------

MPIO Disk1   Disk 3       LQD          Dell EqualLogic DSM

MPIO Disk0   Disk 2       FOO          Dell EqualLogic DSM

That’s why! Somehow the testing volume, Disk 2, had its LB policy set to Fail Over Only (FOO), so no wonder it was using ONE PATH ALL THE TIME. After I changed it to LQD, everything works like a champ!
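Since the policy was silently different on one disk, a quick way to catch this is to scan the `mpclaim -s -d` output for any disk still on Fail Over Only. The sketch below parses the text shown above; the regular expression assumes the column layout stays as in that sample, and the function names are made up for illustration.

```python
import re

# Sample text copied from the mpclaim -s -d output above.
SAMPLE = """\
MPIO Disk    System Disk  LB Policy    DSM Name
-------------------------------------------------------------------------------
MPIO Disk1   Disk 3       LQD          Dell EqualLogic DSM
MPIO Disk0   Disk 2       FOO          Dell EqualLogic DSM
"""

# Matches data rows only; the header has no digit after "MPIO Disk".
ROW = re.compile(r"^(MPIO Disk\d+)\s+(Disk \d+)\s+(\S+)\s+(.+?)\s*$")


def parse_mpclaim(text: str) -> list[dict]:
    """Return one dict per MPIO disk row found in the text."""
    rows = []
    for line in text.splitlines():
        match = ROW.match(line)
        if match:
            rows.append({
                "mpio_disk": match.group(1),
                "system_disk": match.group(2),
                "policy": match.group(3),
                "dsm": match.group(4),
            })
    return rows


def failover_only_disks(rows: list[dict]) -> list[str]:
    """System disks whose load-balance policy is Fail Over Only (FOO)."""
    return [row["system_disk"] for row in rows if row["policy"] == "FOO"]


rows = parse_mpclaim(SAMPLE)
print(failover_only_disks(rows))
```

Running this against the sample flags Disk 2, the test volume that was stuck on a single path.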

More Update (October 9, 2010)

Suddenly it stopped working again last night after rebooting the server, which is so strange. So I double-checked the switch settings and all the Broadcom 5709C Advanced settings, making sure all offloads are turned off and Flow Control has been set to TX only.

Please also MAKE SURE that under Windows iSCSI Initiator Properties > Dell Equallogic MPIO, all of the active paths are showing “Yes” under “Managed”. I had a case where 1 out of 4 paths was showing “No”; then somehow I could never get the 2nd NIC to kick in, and I also received very bad IOmeter results as well as this annoying FALSE warning:

Alerts for group your_group_name

Priority: Caution

Estimated Time Detected: 10/09/2010 09:54

Duration: 4 m 0 s

Status: Cleared

Alert: “Member your_member_name TCP retransmit percentage of 1.7%. If this trend persists for an hour or more, it may be indicative of a serious problem on the member, resulting in an email notification.”

Update again October 9, 2010 10:30AM

Case not solved, still having the same problem, contacting EQL support again.

More Update (October 25, 2010)

I’ve found something new today.

I found there is always JUST ONE NIC taking the load in all of steps 1-9 below.

No matter whether I install the HIT Kit with or without EQL MPIO, or just plain Microsoft MPIO, it is still just 1 NIC all the time.

1. I un-installed the HIT Kit as well as MPIO, then rebooted.

2. Test IOmeter: single link went up to 100%, NO TCP-RETRANSMIT.

3. Install the latest HIT Kit (3.4.2) again (DE-SELECTING EQL MPIO, which is the last option, as I suspect there are some conflicts; I will install it later at Step 5). Test IOmeter a 2nd time: single link went up to 100%, NO TCP-RETRANSMIT.

4. Install Microsoft MPIO and reboot. MSFT2005iSCSIxxxx is installed correctly and showing under DSM, and NO EQL DSM device is found, as expected. Test IOmeter a 3rd time: single link went up to 100%, NO TCP-RETRANSMIT.

5. Under MPIO there is no EQL DSM device, so I re-install the HIT Kit (ie, actually Modify) with the last option, MPIO, selected (that’s EQL MPIO, right?), then reboot the server.

6. Now test IOmeter the 4th time: single link went up to 100%, 1% TCP-RETRANSMIT!!!

(IT SEEMS TO ME THE HIT KIT’S EQL MPIO IS CONFLICTING WITH MS MPIO, or something like that)

7. This time I un-install the HIT Kit again, leaving Microsoft MPIO in place. Before rebooting, test IOmeter a 5th time: single link went up to 100%, STILL 1% TCP-RETRANSMIT.

8. Test IOmeter a 6th time with only the previously installed Microsoft MPIO: single link went up to 100%, NO TCP-RETRANSMIT. Now re-install the HIT Kit again with all options selected and reboot.

9. After the reboot, test IOmeter the 7th time: single link went up to 100%, STILL 1% TCP-RETRANSMIT!!!

I GIVE UP, literally, for today only, but at least I can say that MPIO is causing the high TCP-RETRANSMIT and the poor performance when using Veeam Backup in SAN mode. I intend to keep the HIT Kit installed and remove the EQL MPIO PART ONLY, leaving all other HIT Kit components selected, so at least I am not getting that horrible TCP-RETRANSMIT thing, which is really affecting my server.

Btw, I don’t think it matters that one of my NICs is the on-board LOM and the other is on the riser, as I disabled one at a time (first disabled the LOM and tested IOmeter, then disabled the riser NIC and tested IOmeter) and still got 1-2% TCP-RETRANSMIT with the HIT Kit (EQL MPIO installed). THAT IS WITH ONLY 1 ACTIVE LINK WHILE THE OTHER LINK HAS BEEN DISABLED MANUALLY, AND I AM STILL GETTING TCP-RETRANSMIT.

So my conclusion is: “There must be a conflict between the EQL MPIO DSM and the MS MPIO DSM on Windows Server 2008 R2.”

I believe the EQL HIT Kit MPIO contains A BUG THAT PREVENTS IT FROM WORKING WITH Windows Server 2008 R2 MPIO? I think all the others (W2K3 or W2K8 SP2) are working fine, just not W2K8 R2.

Finally, my single-link iSCSI NIC is working fine, with no more TCP-RETRANSMIT at all. Speed is in the 600-700Mbps range during Veeam Backup, and it pushes my 4-core E5620 2.4GHz CPU to 90%, so I am fine with that. (ie, better a single working path than two non-working MPIO paths)

More Update (December 22, 2010)

So far I have been able to try the following:

1. I’ve updated the Broadcom firmware to the latest 6.0.22.0 from 5.x.

2. Then I re-installed (Modify, added back) the MPIO module from the latest HIT Kit.

3. I ran the 1st command, mofcomp %systemroot%\system32\wbem\iscsiprf.mof, but not the 2nd, as http://support.microsoft.com/kb/2001997 says the 2nd command, mofcomp.exe %systemroot%\iscsi\iscsiprf.mof, is actually for Windows Server 2003 and I am running Windows Server 2008 R2.

Still the same result; this time TCP RETRANSMIT is over 2.5%, and still just one link is being used (ie, no MPIO).

However, I discovered something new this time as well:

As soon as I decrease the Broadcom NIC’s MTU from 9000 to 1500 (ie, no Jumbo Frames) on the PowerEdge R610, TCP RETRANSMIT drops to almost 0% (0.1%), but there is still no MPIO (ie, still just one link being used).

So the conclusion of my findings is:

The TCP RETRANSMIT now seems related to the Jumbo Frame setting. Any idea where the problem could be?

If it’s the switch settings, then how come my VM on the same switch can load up to almost 460Mbps without any TCP retransmit?

It’s probably still related to the W2K8 R2 settings and the HIT Kit’s MPIO conflict.
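One common way to check whether Jumbo Frames really pass end to end is a ping with the Don’t Fragment flag set and the largest payload that fits in the MTU. The sketch below only works out that payload size (MTU minus 20 bytes of IPv4 header and 8 bytes of ICMP header, assuming no IP options) and builds the matching Windows `ping -f -l` command line. The target IP is a placeholder, not an address from my setup.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo header


def max_ping_payload(mtu: int) -> int:
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    return mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES


def windows_ping_command(target_ip: str, mtu: int) -> str:
    """Windows ping with Don't Fragment (-f) and an explicit payload size (-l)."""
    return f"ping -f -l {max_ping_payload(mtu)} {target_ip}"


TARGET = "192.0.2.10"  # placeholder: replace with the EQL group IP

for mtu in (9000, 1500):
    print(mtu, "->", windows_ping_command(TARGET, mtu))
```

If the 8972-byte ping fails while the 1472-byte ping succeeds, some hop between the NIC and the array is not passing Jumbo Frames.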

More Update (December 23, 2010)

Today I was also able to test Broadcom iSCSI HBA mode according to EQL’s instructions: specifically, I disabled NDIS mode (leaving iSCSI only), discovered the target using a specific NIC, and then enabled MPIO.

Unfortunately, there is still just a single link even in iSCSI HBA mode, strange!

One thing I did notice, however, is that CPU usage indeed decreased from 8% to 2%. But considering my CPU is rather powerful, the CPU cost of using the software iSCSI initiator can be ignored.

More Update (January 20, 2011)

With the help of the local Pro-Support team, the MPIO malfunction has FINALLY been identified! It’s due to the RRAS service running on the same server, which causes a routing problem that somehow disables the 2nd path automatically and causes high TCP-RETRANSMIT.

After disabling the RRAS service, I was also able to get both NICs loaded to 99% for the whole IOmeter testing period with NO TCP-RETRANSMIT, for the first time in 3 months!

More Update (January 21, 2011)

More findings from local Pro-Support team:

The issue has nothing to do with EQL; below is my testing:

I tried to capture ICMP packets with Wireshark.

iSCSI1 IP 192.168.19.28 metric 266

iSCSI2 IP 192.168.19.128 metric 265 (all traffic goes through this NIC)

Using a server at 192.168.19.4 to ping 192.168.19.28: in Wireshark, monitoring iSCSI1 shows only the REQUEST packets, while monitoring iSCSI2 shows the REPLY packets. That means an ICMP request comes in on iSCSI1 and the reply goes out on iSCSI2. It was routed by RRAS.

After RRAS was disabled, it comes in and goes out on iSCSI1.
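The behaviour in the capture can be modelled with a toy route lookup: when two interfaces have a route to the same subnet, the one with the lower metric wins for outbound traffic, whichever interface the request arrived on. This is a simplified sketch, not how Windows or RRAS is actually implemented; the /24 mask is an assumption (the mask was not stated) and the class and function names are made up for illustration.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    network: ipaddress.IPv4Network
    interface: str
    metric: int


def pick_route(routes: list[Route], destination: str) -> Route:
    """Longest prefix wins; ties are broken by the lowest metric."""
    dest = ipaddress.ip_address(destination)
    candidates = [r for r in routes if dest in r.network]
    return min(candidates, key=lambda r: (-r.network.prefixlen, r.metric))


# Metrics from the capture above; the /24 subnet is assumed.
SUBNET = ipaddress.ip_network("192.168.19.0/24")
ROUTES = [
    Route(SUBNET, "iSCSI1", 266),
    Route(SUBNET, "iSCSI2", 265),
]

# The reply to the pinging server leaves through the lower-metric NIC.
reply_route = pick_route(ROUTES, "192.168.19.4")
print(reply_route.interface)
```

In this model every reply to 192.168.19.4 leaves via iSCSI2, even when the request came in on iSCSI1, which matches what Wireshark showed while RRAS was routing.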

I’m checking how to disable routing between these 2 NICs in RRAS. I’ll update you later. Thanks.

More Update (January 24, 2011) – PROBLEM SOLVED, IT WAS DUE TO RRAS CAUSING ROUTING PROBLEM TO MICROSOFT MPIO MODULE

More findings from local Pro-Support team:

After many tests, it works in my lab with the following settings.

1. Install the HIT Kit and connect to the EQL.

2. Check the 4 paths from the 2 NICs to the 4 EQL ports.

3. In RRAS -> IPv4 -> Static Routes, add 4 entries, one for each of the above 4 paths.

a) Netmask is 255.255.255.255

b) Gateway is the IP of the NIC.

c) Metric is 1

After this setting, traffic returns to normal.

Reboot test: wait for RRAS to start up, then check the traffic; it is normal again.
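The four static routes can be written down as data to see why they fix the problem: each EQL port gets a host route (/32) through a specific NIC, and a host route is more specific than the shared subnet route. The sketch below is illustrative only. The EQL port IPs and the path-to-NIC pairing are hypothetical; only the NIC IPs, the netmask and the metric come from the notes above.

```python
import ipaddress

# (EQL port IP, NIC IP used as gateway). The EQL port IPs and the pairing
# are hypothetical placeholders; the NIC IPs are the ones from the capture.
PATHS = [
    ("192.168.19.201", "192.168.19.28"),
    ("192.168.19.202", "192.168.19.28"),
    ("192.168.19.203", "192.168.19.128"),
    ("192.168.19.204", "192.168.19.128"),
]


def static_routes(paths, metric=1):
    """One host route per path: netmask 255.255.255.255, gateway = NIC IP."""
    return [
        {"destination": port, "netmask": "255.255.255.255",
         "gateway": nic, "metric": metric}
        for port, nic in paths
    ]


def egress_nic(routes, destination, default_nic):
    """NIC used to reach `destination`: a matching host route, else the default."""
    dest = ipaddress.ip_address(destination)
    for route in routes:
        network = ipaddress.ip_network(f"{route['destination']}/{route['netmask']}")
        if dest in network:
            return route["gateway"]
    return default_nic


routes = static_routes(PATHS)
for route in routes:
    print(route)

# Without host routes everything would leave via the lower-metric NIC (.128).
print(egress_nic(routes, "192.168.19.201", default_nic="192.168.19.128"))
```

With the host routes in place, traffic to each EQL port is pinned to the NIC that owns that path instead of collapsing onto the single lowest-metric interface.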

1. Use Veeam’s great free tool FastSCP to upload the original.vmdk file (nothing else, just that single vmdk file is enough, not even vmx or any other associated files) to /vmfs/san/import/original.vmdk. In case you didn’t know, Veeam FastSCP is way faster than the old WinSCP or accessing VMFS from VI Client.

1. Use Veeam’s great free tool FastSCP to upload the original.vmdk file (nothing else, just that single vmdk file is enough, not even vmx or any other associated files) to /vmfs/san/import/original.vmdk. In case you didn’t know, Veeam FastSCP is way faster than the old WinSCP or accessing VMFS from VI Client.

As we found the EQL I/O testing performance is low, only 1 path activated under 2 paths MPIO and disk latency is particular high during write for the newly configured array.

As we found the EQL I/O testing performance is low, only 1 path activated under 2 paths MPIO and disk latency is particular high during write for the newly configured array. For the whole month, my mind is full of VMWare, ESX 4.1, Equallogic, MPIO, SANHQ, iSCSI, VMKernel, Broadcom BACS, Jumbo Frame, IOPS, LAG, VLAN, TOE, RSS, LSO, Thin Provisioning, Veeam, Vizioncore, Windows Server 2008 R2, etc.

For the whole month, my mind is full of VMWare, ESX 4.1, Equallogic, MPIO, SANHQ, iSCSI, VMKernel, Broadcom BACS, Jumbo Frame, IOPS, LAG, VLAN, TOE, RSS, LSO, Thin Provisioning, Veeam, Vizioncore, Windows Server 2008 R2, etc.

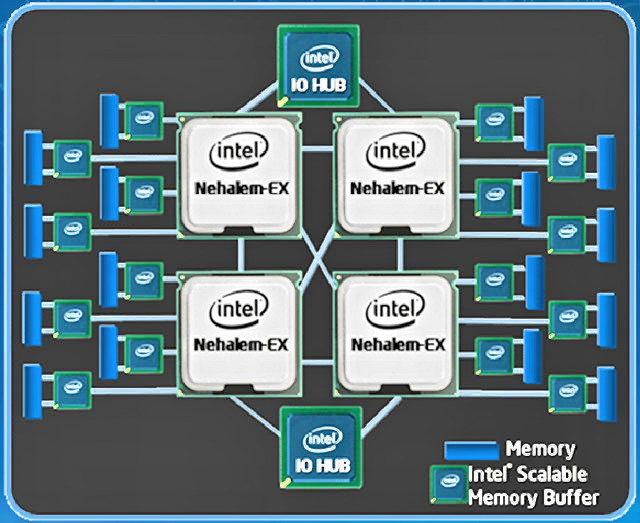

What about 3DPC? Old story applies, it’s 800Mhz, tested it and proved it and if populate with 3DPC and fully filled that 18 DIMMs (ie, 144GB), it will take twice the time to verify and boot the server, so it’s better not to as you lost 40% bandwidth is very important for ESX.

What about 3DPC? Old story applies, it’s 800Mhz, tested it and proved it and if populate with 3DPC and fully filled that 18 DIMMs (ie, 144GB), it will take twice the time to verify and boot the server, so it’s better not to as you lost 40% bandwidth is very important for ESX.